Dude

I was shocked to see the bit depth never go higher than 16 on my Ultra, so I ran this by an AI:

“I play Apple Music on Lysoniq app on iPhone Xr to USB to a ‘Cubilux USB A to TOSLINK Optical Audio Adapter’, to a Wiim Ultra. But the bit rate never goes higher than 16. The Cubilux USB A to TOSLINK Optical Audio Adapter is rated at 24/192 but songs played at that hi-res play 16/192. What is happening?”

It gave this answer — is it correct?

“The issue is a cosmetic display limitation within the WiiM interface, not a hardware bottleneck. You are successfully playing 24-bit/192kHz audio.

The WiiM Optical Display Limitation

The TOSLINK (S/PDIF) protocol does not carry bit-depth metadata in a way that the WiiM Ultra can accurately translate to its display interface.

• Previous Behavior: WiiM software originally defaulted to displaying "24-bit" for all optical connections. Users complained that standard 16-bit sources (like CD players and older TVs) were incorrectly labeled as high-res 24-bit.

• Current Behavior: To resolve those complaints, WiiM updated their firmware to hardcode the optical input display to "16-bit" for all optical inputs, regardless of the actual incoming bit depth.

Hardware Verification

Your physical chain passes a bit-perfect 24/192 signal without downsampling:

• iPhone XR (Apple Music): Outputs up to 24-bit/192kHz natively.

• Lightning to USB / Cubilux Adapter: Converts and outputs the full 24/192 signal over optical.

• WiiM Ultra: Receives and processes the 24/192 signal correctly, even though the UI only displays the 16-bit placeholder.

No configuration changes are necessary. Your system is operating at maximum resolution, and the 16-bit readout is strictly a visual quirk of how WiiM handles optical inputs.”

But what about the app? (That is actually what I was asking about.)

“The WiiM app acts as a direct monitor for the WiiM Ultra's internal status. Because the WiiM Ultra's firmware defaults to labeling all incoming optical signals as 16-bit, the app simply mirrors that hardcoded label.”

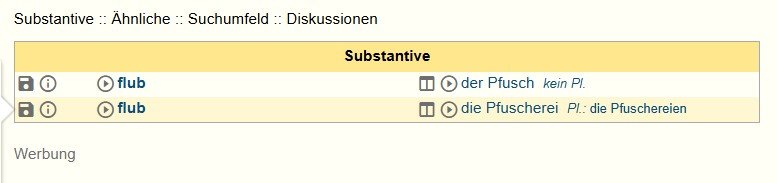

I would suggest that the WiiM engineers change this flub to show “16/24 bit” instead of either number, with “/” meaning “or”.
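For anyone who wants to check the “24-bit” claim rather than trust the display, here is a rough sketch of one way to do it. It assumes you can somehow capture the raw PCM samples as integers in 24-bit slots (for example by recording the optical output on a computer, which is my assumption and not something the WiiM app offers as far as I know). The function name `effective_bit_depth` is made up for illustration. The idea: if every sample has its lowest 8 bits at zero, the stream is most likely 16-bit audio padded into a 24-bit container.

```python
def effective_bit_depth(samples, container_bits=24):
    """Estimate how many bits of the container actually carry data.

    `samples` is an iterable of signed integers, each stored in a slot that
    is `container_bits` wide. Trailing zero bits shared by every sample are
    treated as padding. Hypothetical helper, not part of any WiiM tooling.
    """
    mask = (1 << container_bits) - 1
    combined = 0
    for sample in samples:
        # Fold negative values into their two's-complement bit pattern.
        combined |= sample & mask
    if combined == 0:
        return 0  # digital silence: nothing can be inferred
    # Index of the lowest set bit equals the number of shared trailing zeros.
    trailing_zeros = (combined & -combined).bit_length() - 1
    return container_bits - trailing_zeros


# 16-bit values shifted up into 24-bit slots (low 8 bits are padding)
padded_16 = [0x1235 << 8, -(0x0043 << 8)]
# A sample that uses the lowest bit of the 24-bit slot
true_24 = [0x123457, -5]

print(effective_bit_depth(padded_16))
print(effective_bit_depth(true_24))
```

Take the result with a grain of salt: any digital volume control, EQ, or dither in the chain will fill the low bits even when the original track was 16-bit, so a “24” here only shows that the low bits are in use, not that the source was true hi-res.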
